Develop GenAI applications with confidence using W&B Weave

Building demos of Generative AI applications is deceptively easy; getting them into production (and maintaining their high quality) is not. W&B Weave is here to help developers build and iterate on their AI applications with confidence.

Create rigorous apples-to-apples evaluations to score the behavior of any aspect of your app. Examine and debug failures by inspecting inputs and outputs. Deliver consistently robust performance from AI applications in production.

Built for today’s software developers

Engineers iterating on Generative AI apps need tools built for working with dynamic, non-deterministic models. Weave is designed with the developer experience in mind, providing capabilities for straightforward evaluation and debugging of GenAI applications.

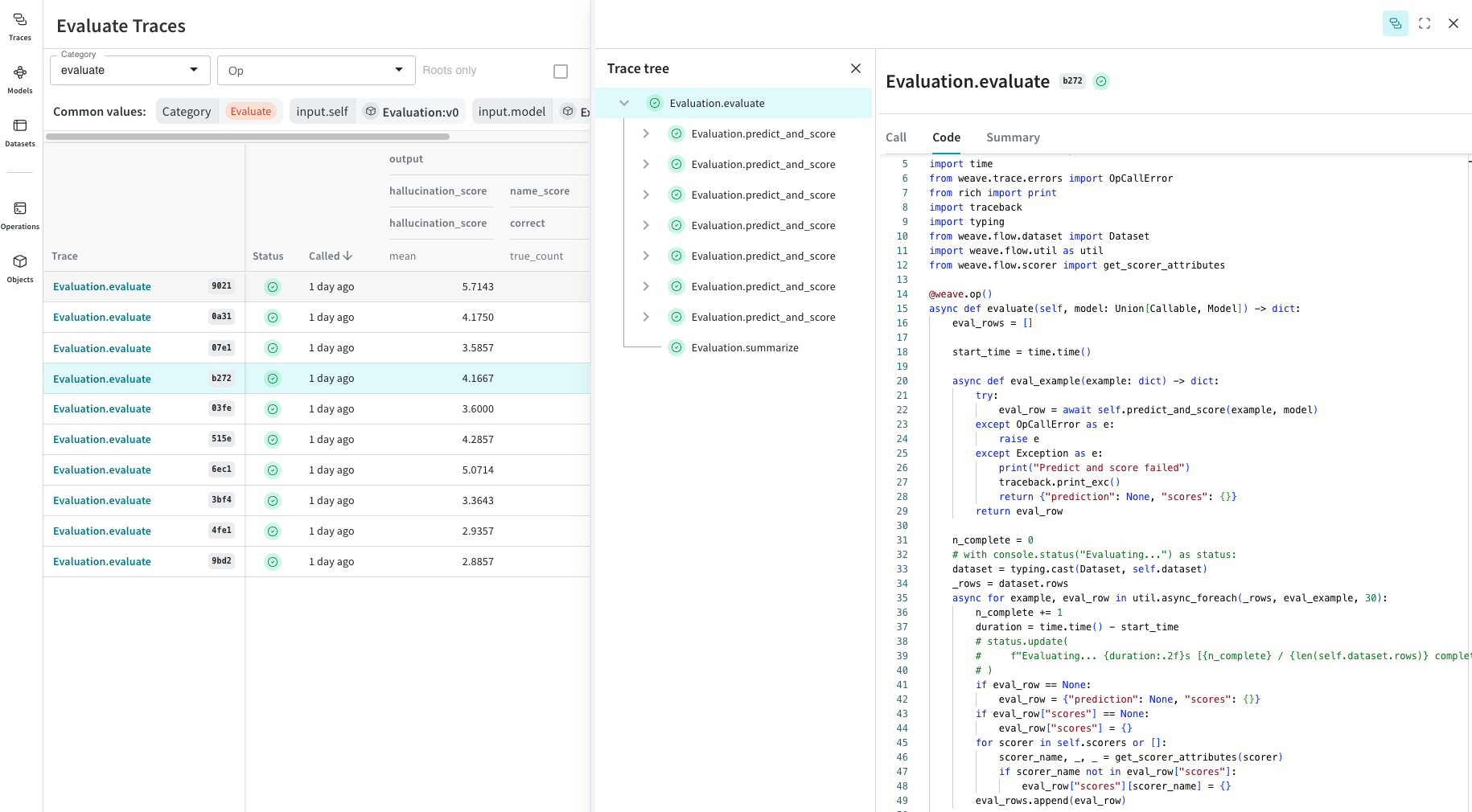

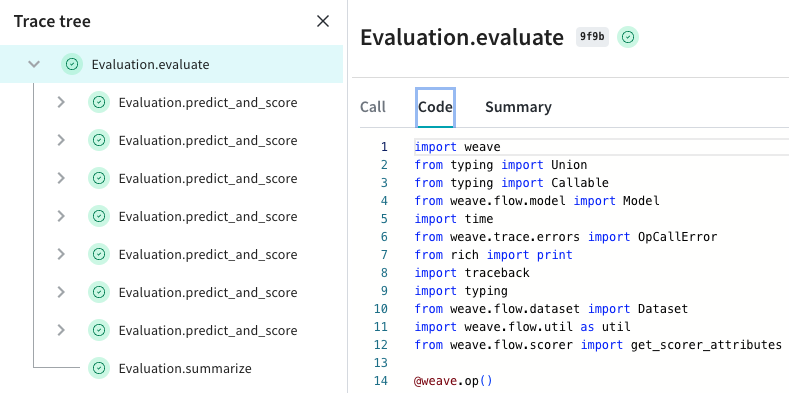

Log and debug inputs, outputs and traces

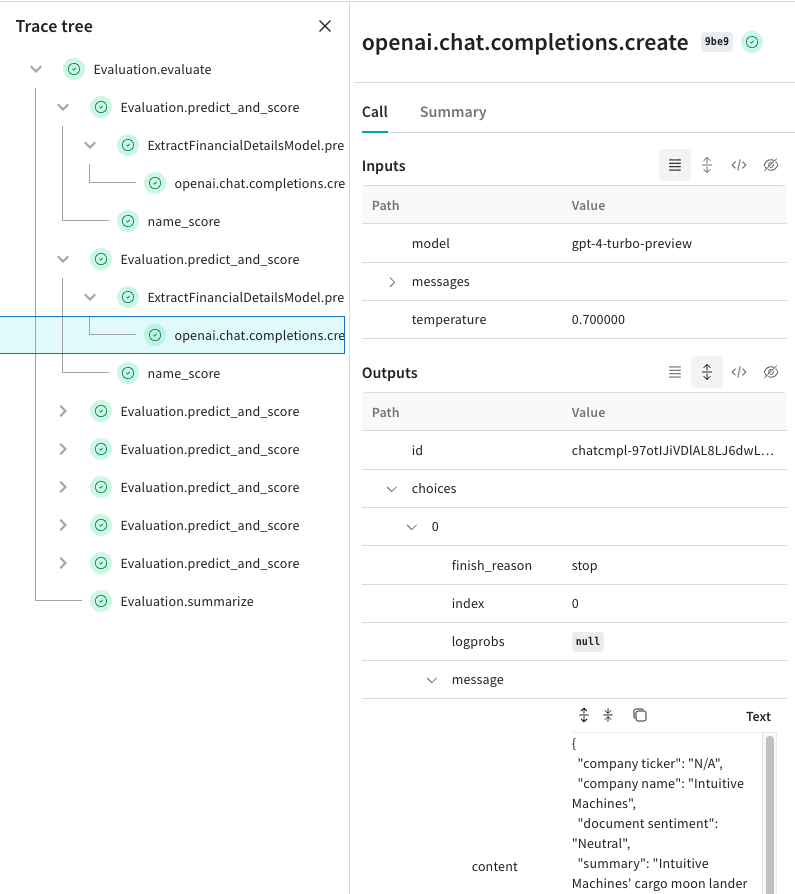

Weave automatically captures all input and output data and builds a tree to help you understand how data flows through your application. Drill into failure modes to find hallucinations or malformed responses, and analyze how different inputs affect document retrieval, tool use or any custom behaviors from your LLM application.

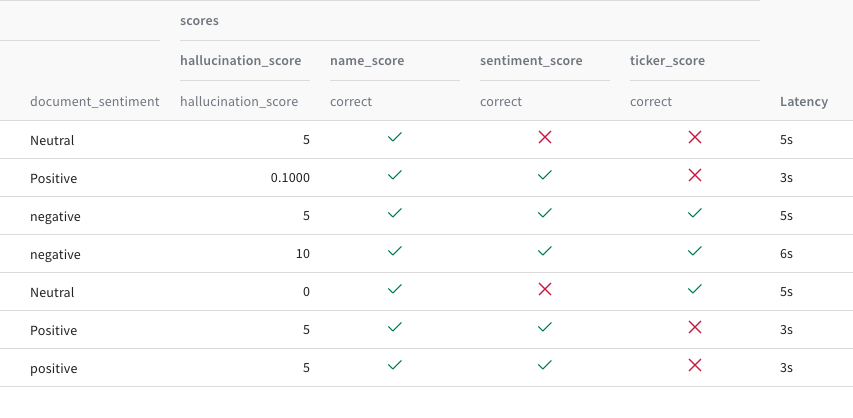

Evaluate and analyze the performance of LLMs

Compare evaluations of model results across different dimensions of performance to ensure applications are as robust as possible when deploying to production. Drill into the details to see exactly what the model got right and where it can improve. Review the inputs and outputs of every call to your model to make tweaks and keep improving the quality of its outputs.
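As a rough illustration of an apples-to-apples comparison, the sketch below runs two stand-in models over the same small dataset and scores each output along two dimensions. It uses only the Python standard library and does not reflect Weave's API; the dataset, `model_a`, `model_b`, the scorers and `evaluate` are all hypothetical.

```python
from statistics import mean

# Every model variant is scored on the same fixed examples.
dataset = [
    {"question": "2 + 2", "expected": "4"},
    {"question": "capital of France", "expected": "Paris"},
]


def model_a(question):
    # Stand-in for one version of the application.
    return {"2 + 2": "4", "capital of France": "Lyon"}.get(question, "")


def model_b(question):
    # Stand-in for a revised version of the application.
    return {"2 + 2": "4", "capital of France": "Paris"}.get(question, "")


def exact_match(output, expected):
    """Score 1.0 when the output equals the expected answer."""
    return float(output == expected)


def non_empty(output, expected):
    """Score 1.0 when the model returned any non-blank text."""
    return float(bool(output.strip()))


def evaluate(model, examples, scorers):
    """Return (mean score per dimension, per-example rows) for one model."""
    rows = []
    for example in examples:
        output = model(example["question"])
        rows.append({
            "input": example["question"],
            "output": output,
            "scores": {s.__name__: s(output, example["expected"]) for s in scorers},
        })
    summary = {s.__name__: mean(r["scores"][s.__name__] for r in rows) for s in scorers}
    return summary, rows


scorers = [exact_match, non_empty]
summary_a, rows_a = evaluate(model_a, dataset, scorers)
summary_b, rows_b = evaluate(model_b, dataset, scorers)
print("model_a:", summary_a)
print("model_b:", summary_b)
```

The per-example rows keep each input and output next to its scores, so a low aggregate can be traced back to the specific cases that caused it.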

The Weights & Biases platform helps you streamline your workflow from end to end

Models

Experiments

Track and visualize your ML experiments

Sweeps

Optimize your hyperparameters

Model Registry

Register and manage your ML models

Automations

Trigger workflows automatically

Launch

Package and run your ML workflow jobs

Weave

Traces

Explore and debug LLMs

Evaluations

Rigorous evaluations of GenAI applications